In image-guided surgery, the surgeon must map pre-operative patient images from the navigation system to the patient on the operating room (OR) table in order to understand the topology and locations of the anatomy of interest below the visible surface. This type of spatial mapping is not trivial, is time-consuming, and may be prone to error. Using augmented reality (AR), we can register the microscope/camera image to pre-operative patient data in order to aid the surgeon in understanding the topology, location, and type of vessels lying below the surface of the patient. This may reduce surgical time and increase surgical precision.

Projects in this area include:

Augmented Reality in Neurovascular Surgery

In neurovascular surgery, and in particular surgery for arteriovenous malformations (AVMs), the surgeon must map pre-operative images of the patient to the patient on the operating room (OR) table in order to understand the topology and locations of vessels below the visible surface. This type of spatial mapping is not trivial, is time-consuming, and may be prone to error. Using augmented reality (AR), we can register the microscope/camera image to pre-operative patient data in order to aid the surgeon in understanding the topology, location, and type of vessels lying below the surface of the patient. This may reduce surgical time and increase surgical precision. In this project, in addition to studying a mixed reality environment for neurovascular surgery, we will examine and evaluate which visualization techniques provide the best spatial and depth understanding of the vessels beyond the visible surface.
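To make the idea of registering the camera image to pre-operative data more concrete, the sketch below shows one common way such an overlay can be computed. It is only an illustration and not the method used in this project. It assumes that a rigid transform (`R`, `t`) from pre-operative image space to camera space is already known, and that the camera follows a simple pinhole model. All names (`apply_rigid`, `project_pinhole`, `overlay_pixels`) and all numeric values are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Pixel = Tuple[float, float]


def apply_rigid(R: Sequence[Sequence[float]], t: Vec3, p: Vec3) -> Vec3:
    """Map a point from pre-operative image space into camera space (R * p + t)."""
    x, y, z = (sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))
    return (x, y, z)


def project_pinhole(p_cam: Vec3, fx: float, fy: float,
                    cx: float, cy: float) -> Optional[Pixel]:
    """Project a camera-space point to pixel coordinates.

    Returns None for points at or behind the camera plane (z <= 0).
    """
    x, y, z = p_cam
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def overlay_pixels(points: Sequence[Vec3], R: Sequence[Sequence[float]], t: Vec3,
                   fx: float, fy: float, cx: float, cy: float) -> List[Pixel]:
    """Return pixel positions at which virtual points would be drawn on the live image."""
    pixels = []
    for p in points:
        uv = project_pinhole(apply_rigid(R, t, p), fx, fy, cx, cy)
        if uv is not None:
            pixels.append(uv)
    return pixels


# Hypothetical example: identity rotation, camera 100 mm from the image origin.
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = (0.0, 0.0, 100.0)
vessel_points = [(0.0, 0.0, 0.0), (10.0, -5.0, 20.0)]  # assumed vessel centreline samples (mm)
pixels = overlay_pixels(vessel_points, R, t, fx=800.0, fy=800.0, cx=320.0, cy=240.0)
```

In a real system, the transform and camera parameters would come from patient registration and camera calibration, and the projected points would then be blended with the live image using one of the visualization techniques under evaluation.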

Figure: A: Colour coding of a vascular DS-CTA volume based on blood flow. B: Vessels overlaid on the patient's skin prior to draping (left). C: Different visualization techniques for combining the live camera image (prior to resection) with the virtual vessels (green, red, blue). D: Based on the virtual information, the surgeon placed a micropad on the brain surface above a virtual marker.

Publications

- Kersten-Oertel, M., Gerard, I. J., Drouin, S., Mok, K., Sirhan, D., Sinclair, D. S. and Collins, D. L. Augmented reality in neurovascular surgery: feasibility and first uses in the operating room. IJCARS (2015): 1–14.

- Kersten-Oertel, M., Gerard, I. J., Drouin, S., Mok, K., Sirhan, D., Sinclair, D. S., & Collins, D. L. (2015). Augmented Reality for Specific Neurovascular Surgical Tasks. In Augmented Environments for Computer-Assisted Interventions (pp. 92–103). Springer International Publishing.

- Kersten-Oertel, M., Gerard, I. J., Drouin, S., Mok, K., Sirhan, D., Sinclair, D., Collins, D. L. “Augmented Reality in Neurovascular Surgery: First Experiences.” Augmented Environments for Computer-Assisted Interventions. Lecture Notes in Computer Science, Volume 8678, 2014, pp. 80–89.

Augmented Reality in Brain Tumour Surgery

Augmented reality (AR) visualization in image-guided neurosurgery (IGNS) allows a surgeon to see rendered preoperative medical datasets (e.g. MRI/CT) from a navigation system merged with the surgical field of view. Combining the real surgical scene with the virtual anatomical models into a comprehensive visualization has the potential of reducing the cognitive burden of the surgeon by removing the need to map preoperative images and surgical plans from the navigation system to the patient. Furthermore, it allows the surgeon to see beyond the visible surface of the patient, directly at the anatomy of interest, which may not be readily visible.

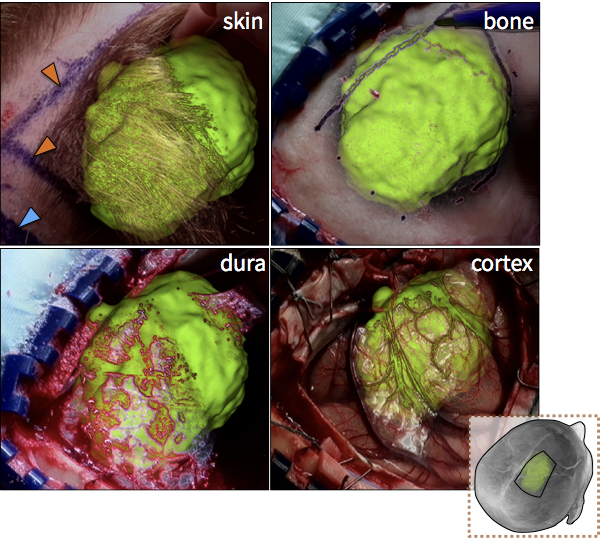

Figure: Augmented reality visualizations from our neuronavigation system. The surgeon used AR for craniotomy planning on the skin (A), the bone (B), the dura (C), and also after the craniotomy on the cortex (D). In A, the orange arrow indicates the posterior boundary of the tumour and the blue arrow indicates the planned posterior boundary of the craniotomy that will allow access to the tumour. The yellow arrow shows the medial extent of the tumour, which is also the planned craniotomy margin. In B, the surgeon uses the augmented reality view to trace around the tumour in order to determine the size of the bone flap to be removed. In C, AR is used prior to the opening of the dura, and in D the tumour is visualized on the cortex prior to its resection.

More recently, this AR view has been ported to a mobile device through the development of the MARIN (Mobile Augmented Reality Interactive Neuronavigator) system. This system enables the surgeon not only to visualize structures below the surface, but also to interact with the presented data in real time during surgery. The system was shown to enable shorter task completion times and higher accuracy than standard guidance systems.

Publications

- Léger, É., Reyes, J., Drouin, S., Popa, T., Hall, J. A., Collins, D. L., Kersten-Oertel, M. (2020). MARIN: an Open Source Mobile Augmented Reality Interactive Neuronavigation System, International Journal of Computer Assisted Radiology and Surgery. https://doi.org/10.1007/s11548-020-02155-6.

- Kersten-Oertel, M., Gerard, I. J., Drouin, S., Hall, J. A., Collins, D. L. “Intraoperative Craniotomy Planning for Brain Tumour Surgery using Augmented Reality”, to be presented at CARS 2016.

- Gerard, I. J., Kersten-Oertel, M., Drouin, S., Hall, J. A., Petrecca, K., De Nigris, D., Arbel, T. and Collins, D. L. (2016) “Improving Patient Specific Neurosurgical Models with Intraoperative Ultrasound and Augmented Reality Visualizations in a Neuronavigation Environment,” in 4th Workshop on Clinical Image-based Procedures: Translational Research in Medical Imaging, LNCS 9401, pp. 1–8. (Best Paper)

- Kersten-Oertel, M., Gerard, I. J., Drouin, S., Mok, K., Petrecca, K., & Collins, D. L. (2015) Augmented Reality for Brain Tumour Resections. Int J CARS, 10(1):S260.